JSON Parser Benchmarking

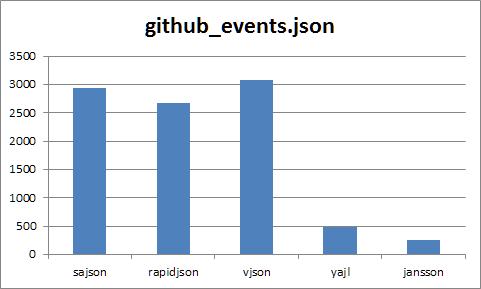

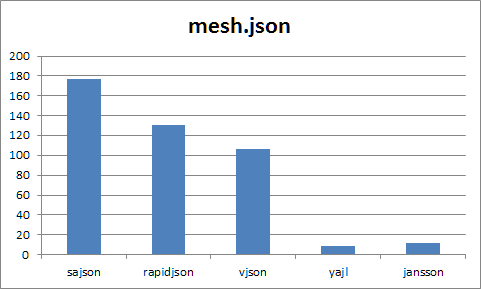

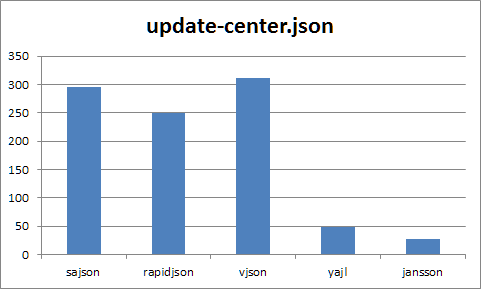

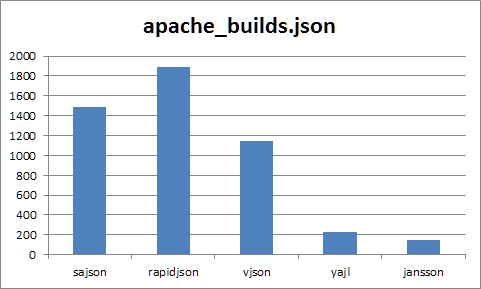

With the caveat that each parser provides different functionality and access to the resulting parse tree, I benchmarked sajson, rapidjson, vjson, YAJL, and Jansson. My methodology was simple: given large-ish real-world JSON files, parse them as many times as possible in one second. To include the cost of reading the parse tree in the benchmark, I then iterated over the entire document and summed the number of strings, numbers, object values, array elements, etc.
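The shape of that measurement loop can be sketched as follows. This is only an illustration under stated assumptions, not the harness used for the charts: it is written in Python and uses the standard-library `json` module as a stand-in for the C and C++ parsers, and the names `count_nodes` and `parses_per_second` are hypothetical. It tallies values by JSON type, which approximates the traversal described above without claiming to match it exactly.

```python
import json
import time
from collections import Counter


def count_nodes(node, counts):
    """Walk a parsed document and tally every value by JSON type."""
    if isinstance(node, dict):
        counts["object"] += 1
        for value in node.values():
            count_nodes(value, counts)
    elif isinstance(node, list):
        counts["array"] += 1
        for item in node:
            count_nodes(item, counts)
    elif isinstance(node, str):
        counts["string"] += 1
    elif isinstance(node, bool):
        # Checked before numbers because bool is a subclass of int in Python.
        counts["boolean"] += 1
    elif isinstance(node, (int, float)):
        counts["number"] += 1
    elif node is None:
        counts["null"] += 1
    return counts


def parses_per_second(text, duration=1.0):
    """Parse and traverse `text` repeatedly for roughly `duration` seconds."""
    iterations = 0
    start = time.perf_counter()
    deadline = start + duration
    while time.perf_counter() < deadline:
        # The traversal is inside the timed loop so that tree access is measured too.
        count_nodes(json.loads(text), Counter())
        iterations += 1
    elapsed = time.perf_counter() - start
    return iterations / elapsed


# Tiny made-up document; a real run would load one of the files listed below.
sample = '{"builds": [{"name": "a", "ok": true, "size": 1.5, "next": null}]}'
print(count_nodes(json.loads(sample), Counter()))
print(f"{parses_per_second(sample, duration=0.1):.0f} parses/second")
```

Keeping the traversal inside the timed loop matters: a parser that defers work until values are read would otherwise look faster than it is in practice.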

The documents are:

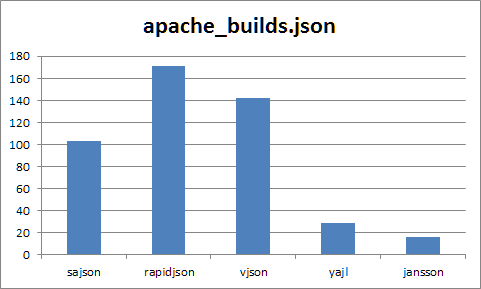

- apache_builds.json: Data from the Apache Jenkins installation. Mostly it's an array of three-element objects, with string keys and string values.

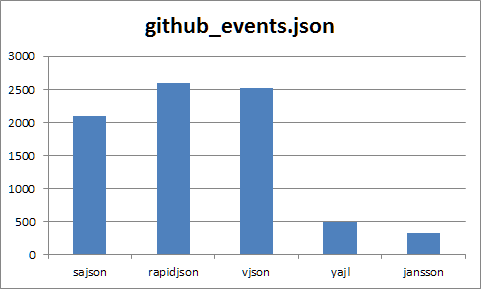

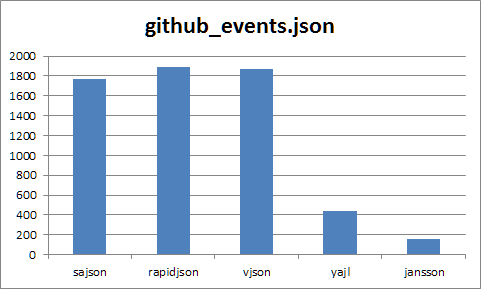

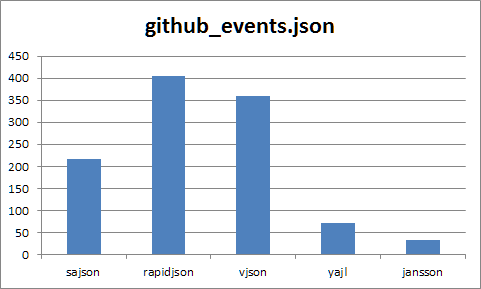

- github_events.json: JSON data from GitHub's events feature. Nested objects several levels deep, mostly strings, but also contains booleans, nulls, and numbers.

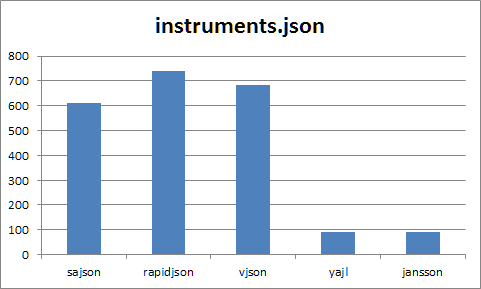

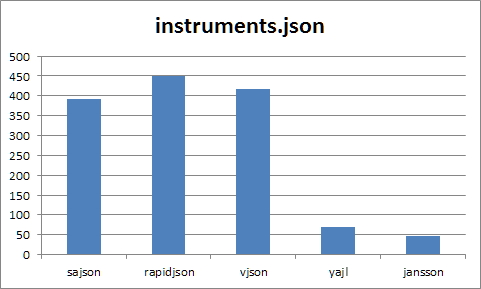

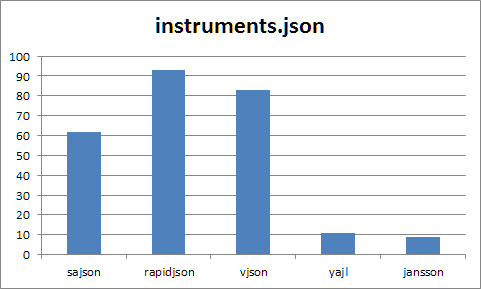

- instruments.json: A very long array of many-key objects.

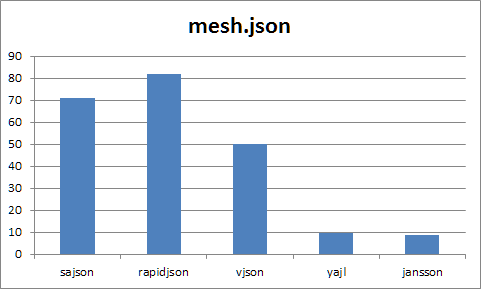

- mesh.json: 3D mesh data. Almost entirely consists of floating point numbers.

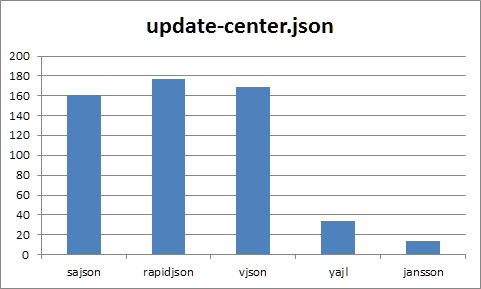

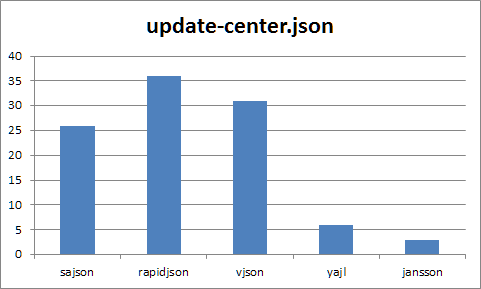

- update-center.json: Also from Jenkins, though I'm not sure where I found it.

apache_builds.json, github_events.json, and instruments.json are pretty-printed with a great deal of interelement whitespace.
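Whitespace matters to a benchmark like this because the parser still has to scan past every byte of it. A rough way to estimate how much of a file is formatting is to compare its length with a compact re-serialization. The sketch below assumes Python's standard `json` module, and `whitespace_share` is a hypothetical helper; because re-serializing can also change number formatting or string escapes, the result is an estimate rather than an exact whitespace count.

```python
import json


def whitespace_share(text):
    """Estimate the fraction of characters dropped by compact re-serialization."""
    compact = json.dumps(
        json.loads(text), separators=(",", ":"), ensure_ascii=False
    )
    return 1 - len(compact) / len(text)


# Made-up document shaped loosely like the three-element objects described above.
doc = {"builds": [{"name": "a", "color": "blue", "url": "x"}]}
pretty = json.dumps(doc, indent=4)
minified = json.dumps(doc, separators=(",", ":"))

print(f"pretty-printed: {whitespace_share(pretty):.0%} formatting")
print(f"minified:       {whitespace_share(minified):.0%} formatting")
```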

Now for the results. The Y axis is parses per second. Thus, higher is faster.

Core 2 Duo E6850, Windows 7, Visual Studio 2010, x86

Core 2 Duo E6850, Windows 7, Visual Studio 2010, AMD64

Atom D2700, Ubuntu 12.04, clang 3.0, AMD64

Raspberry Pi

Conclusions

sajson compares favorably to rapidjson and vjson, all of which stomp the C-based YAJL and Jansson parsers. 64-bit builds are noticeably faster, presumably because the additional registers help. Raspberry Pis are slow. :)

Interesting! Would you mind sharing your sample apps? I wrote a json parser (https://github.com/mfascia/minja) and I would be interested in comparing its speed with these "reference" parsers. Thank you!

I was looking for a parser for a resource-constrained environment (embedded MCU) and found rapidjson, sajson, and vjson, but I still don't like allocations at all.

So I came across jsmn ( http://zserge.bitbucket.org/jsmn.html ) and the ultra-minimal js0n ( https://github.com/quartzjer/js0n ). I'm more interested in robustness and stability than in speed, but for kicks, if you still have your benchmark setup code around, I would rerun it with these included. I'm mostly interested in running on modern ARM Cortex-M parts clocked at less than 100 MHz : )

Hi kert,

I did consider js0n but it wasn't what I was looking for in that the generated "parse tree" isn't complete. sajson is a similar concept (single-pass, fills one array) but the resulting parse tree is actually useful.

I was not aware of jsmn at the time, thanks! If I update the benchmarks I'll add jsmn: it looks pretty reasonable.

Thanks, Chad

If you do an update, please include jvar (referenced on json.org), a new C++ parser that uses a JS-style programming model in C++ and is quite fast.