View-Independent Translucent Triangle Sorting

Given a large, potentially self-intersecting, translucent, two-sided triangle mesh, we'd like to render it with as few visual artifacts as possible. The issue is that OpenGL and Direct3D will render triangles in exactly the order they're given, and since alpha blending is not commutative, alpha-blended triangles must be rendered back to front.

My colleague, Jon Watte, pointed me to an article he'd written that describes an O(n^2) algorithm that splits all double-sided triangles into two, which are then sorted based on a coverage factor: how many other triangles "shadow" this one. Triangles that are farther back are sorted lower in the list than triangles in front of them. This algorithm is view-independent.

My understanding is that Torque Engine and Unreal Engine implement similar algorithms.

The idea works well in practice, but O(n^2) is too slow for large meshes, especially approaching the 100,000 triangle mark.

I set out to see if we could get similar results from an algorithm with subquadratic or even linear-ish running time.

The basic idea is, if we can define a strict weak ordering on triangles, then we can run a standard sort algorithm across the triangles and have a perfectly-ordered, view-independent, translucent mesh.

What does it mean to order two translucent triangles in a mesh?

bool compare(Triangle A, Triangle B) {

a_behind_b = all points in A behind B

b_behind_a = all points in B behind A

if a_behind_b and b_behind_a: return false

if !(a_behind_b or b_behind_a): return false

if a_behind_b: return true

if b_behind_a: return false

// some kind of weighted area comparison

}

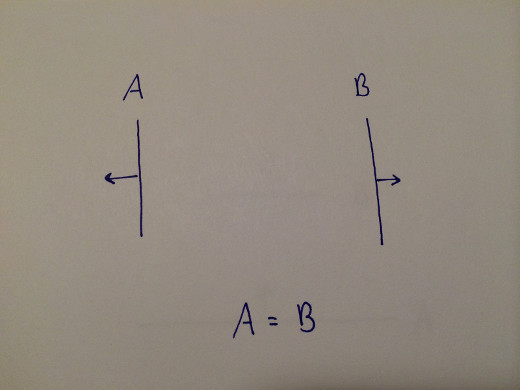

If two triangles are facing away from each other -- that is, each is behind the other -- then the order in which they're rendered does not matter.

A = B.

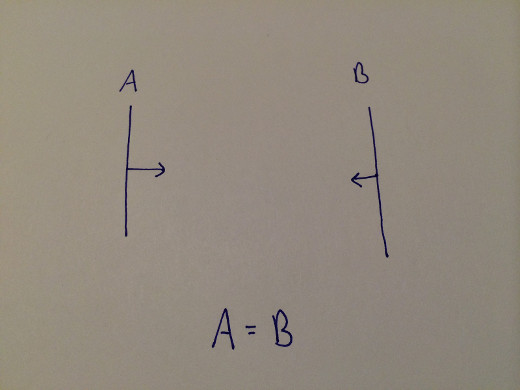

If two triangles face each other, their order also does not matter.

Again, here, A = B.

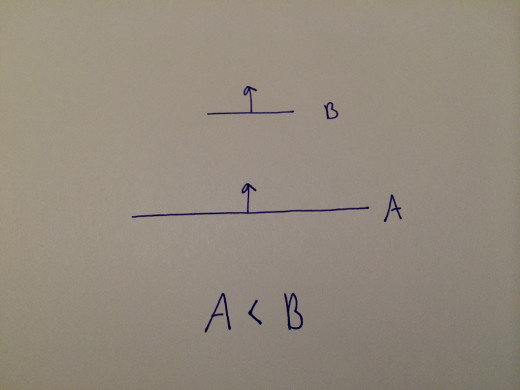

If two triangles point the same direction, but triangle A is in front of triangle B, then A should always be rendered before B.

A < B.

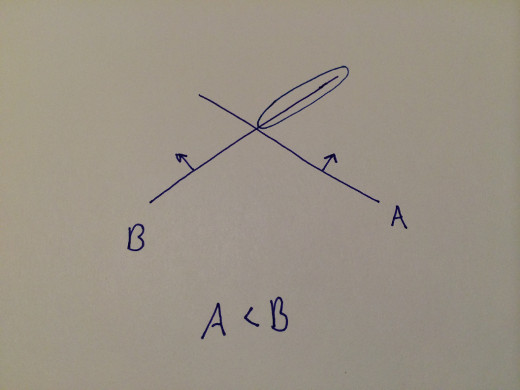

If two triangles intersect, without splitting, there is no correct rendering order. We'd like to sort them by each's coverage of the other, which can be calculated by each triangle's plane with the other triangle.

In this case, A < B because B covers A more than A covers B.

So far we have a strict weak ordering. However, even ignoring the possibility of intersecting triangles, there's a problem. Strict weak ordering implies and requires transitivity. (Indeed, that's how O(n lg n) comparison sorting algorithms work.) However, consider the following three triangles, A, B, and C.

A > B B > C C > A

Without splitting, there is no ordering that can render this mesh correctly from all viewpoints.

Rats, this means our triangle comparison does not implement a strict weak ordering. What if we passed it to std::sort or std::stable_sort anyway? Undefined behavior, according to my reading of the C++ 2003 spec. But let's say we implemented a merge sort without undefined behavior if given a non-strict-weak ordering. What's the worst ordering that could happen?

Given that comparison sorts rely on transitivity, consider our failure case from before, this time with a few extra "easy" triangles (labeled X and Y) thrown in.

The sort may compare A>B and B>C, assuming "A>C". Then, worst case, given any triangle X where X>A, "X>C" which could be incorrect. In addition, given any triangle Y where C>Y, "A>Y" which could also be incorrect. The circled Xs and Ys in the diagram above indicate incorrectly-ordered triangles if the sort assumes "A>C". That is, even the smallest cycle could result in several triangles being sorted incorrectly. A possible sort order would be: YYYCBAXXX, even though, ideally, one of the Ys > A and and two of the Xs < C.

A body of research named consensus clustering or rank aggregation explores approximating the optimal ordering given conflicting constraints. The goal is to minimize conflicting orderings. An optimal solution is NP-complete, but I propose the following stochastic solution, where k and j are tunable constants:

for k iterations:

shuffle triangle list

merge sort

pick j random pairs of triangles, count number of pairs where (second < first)

keep ordering that minimizes incorrect orderings

This algorithm runs in O(k*(n+j)) -- better than quadratic, which was the stated goal. Also, given sufficient iterations, stochastic algorithms tend to avoid worst-case scenarios, which is good enough here.

Now, if we could find cycles in subquadratic time, we could break them by selectively splitting triangles or by removing cycles entirely from the mesh and merging them separately, once the strict weak ordering on non-cycles is determined. Cycle detection is O(E+V) in general, but total ordering implies E=V^2.

Future work: Could we use the fact that some triangles can be rendered in any order relative to other triangles to optimize vertex cache hits?

Thanks to a bunch of people who explored this problem with me.